MLflow: Built-in Scorers for LLM Evaluation Without Custom Logic

Plus: version your prompts like Git commits

Grab your coffee. Here are this week’s highlights.

📅 Today’s Picks

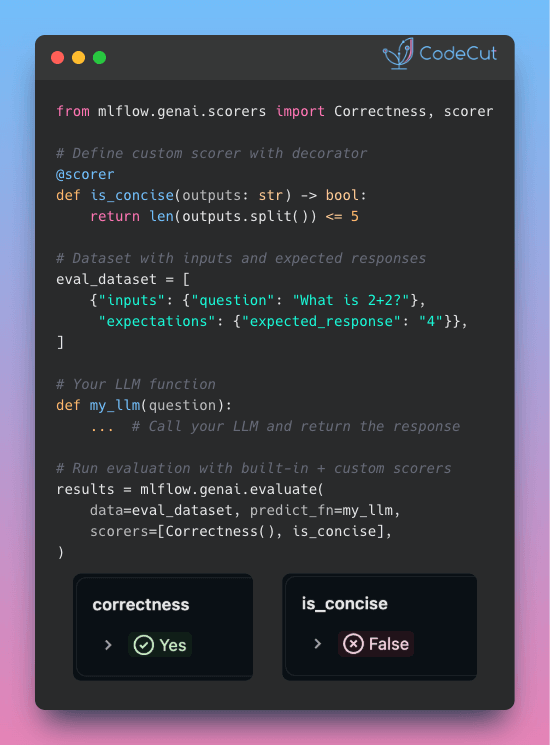

MLflow: Built-in Scorers for LLM Evaluation Without Custom Logic

Problem

Evaluating LLM outputs for correctness, relevance, and guideline adherence requires writing custom evaluation logic for each criterion.

This creates repetitive boilerplate code and slows down your iteration cycle.

Solution

MLflow provides pre-built scorers for common evaluation patterns like correctness and guideline adherence.

Key benefits:

Pre-built scorers for correctness, relevance, and safety checks

Simple decorator syntax for custom metrics

Automatic tracking of scores, rationales, and metadata in MLflow

Combine multiple scorers in a single evaluation run

Integrate with existing MLflow workflows without additional setup
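To make "combining multiple scorers in a single evaluation run" concrete, here is a minimal, library-free sketch of the pattern. Each scorer takes a model output plus optional expectations and returns a value with a rationale. Everything below (`Score`, `keyword_correctness`, `is_concise`, `evaluate`) is hypothetical and only mimics the idea. It is not MLflow's API. As far as I know, in MLflow 3.x the real entry points are the `@scorer` decorator from `mlflow.genai.scorers` and `mlflow.genai.evaluate(data=..., scorers=[...])`, and the built-in scorers use an LLM judge rather than keyword matching, so check the docs for your version.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Score:
    # Hypothetical result type: a value plus a rationale, mimicking what an
    # evaluation tool records for each scorer on each row.
    name: str
    value: bool
    rationale: str


def keyword_correctness(outputs: str, expectations: dict) -> Score:
    # Hypothetical stand-in for an LLM-judged correctness check:
    # passes only if every expected fact appears in the output text.
    text = outputs.lower()
    missing = [f for f in expectations["expected_facts"] if f.lower() not in text]
    if missing:
        return Score("correctness", False, f"missing: {missing}")
    return Score("correctness", True, "all expected facts present")


def is_concise(outputs: str, expectations: dict) -> Score:
    # A deterministic custom metric: outputs of 30 words or fewer pass.
    n_words = len(outputs.split())
    return Score("is_concise", n_words <= 30, f"{n_words} words (limit 30)")


def evaluate(data: list[dict], scorers: list[Callable[[str, dict], Score]]) -> list[list[Score]]:
    # Apply every scorer to every row: several scorers, one evaluation pass.
    return [[s(row["outputs"], row["expectations"]) for s in scorers] for row in data]


data = [
    {"outputs": "MLflow is an open-source platform for the ML lifecycle.",
     "expectations": {"expected_facts": ["open-source", "ML lifecycle"]}},
    {"outputs": "MLflow tracks experiments.",
     "expectations": {"expected_facts": ["open-source"]}},
]

results = evaluate(data, [keyword_correctness, is_concise])
for row_scores in results:
    for score in row_scores:
        print(score.name, score.value, score.rationale)
```

Swapping `keyword_correctness` for an LLM judge would change how each score is computed, but not how the scores and rationales are collected per row.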


🔄 Worth Revisiting

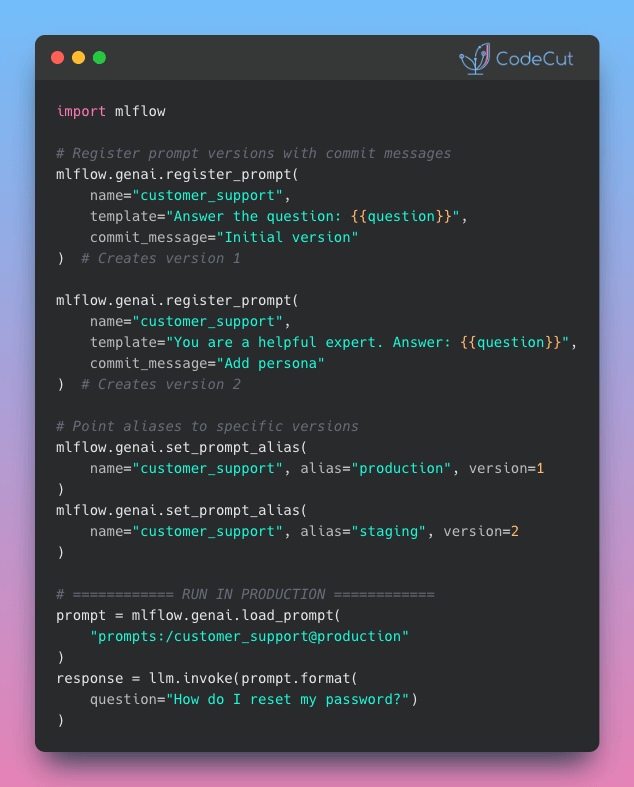

Swap AI Prompts Instantly with MLflow Prompt Registry

Problem

Finding the right prompt often takes experimentation: tweaking wording, adjusting tone, testing different instructions.

But with prompts hardcoded in your codebase, each test requires a code change and redeployment.

Solution

MLflow Prompt Registry solves this with aliases. Your code references an alias like “production” instead of a version number, so you can point that alias at a different version without touching the code.

Here’s how it works:

Every prompt edit creates a new immutable version with a commit message

Register prompts once, then assign aliases to specific versions

Deploy to different environments by creating aliases like “staging” and “production”

Track full version history with metadata and tags for each prompt
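The alias mechanism is easiest to picture as a toy in-memory registry: versions are append-only and immutable, and an alias is a movable pointer to one version number. The class and method names below (`PromptRegistry`, `register`, `set_alias`, `load`) are hypothetical and only model the concept. In MLflow itself, as far as I know, the corresponding calls are `mlflow.genai.register_prompt(...)` and `mlflow.genai.load_prompt("prompts:/<name>@production")`, but verify them against the docs for your MLflow version.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptVersion:
    # frozen=True: a version cannot be edited after it is created.
    version: int
    template: str
    commit_message: str


@dataclass
class PromptRegistry:
    # Hypothetical toy registry, not MLflow's API.
    versions: dict[str, list[PromptVersion]] = field(default_factory=dict)
    aliases: dict[tuple[str, str], int] = field(default_factory=dict)

    def register(self, name: str, template: str, commit_message: str) -> PromptVersion:
        # Every edit appends a new immutable version; old versions stay untouched.
        history = self.versions.setdefault(name, [])
        pv = PromptVersion(len(history) + 1, template, commit_message)
        history.append(pv)
        return pv

    def set_alias(self, name: str, alias: str, version: int) -> None:
        # An alias is just a movable pointer from (name, alias) to a version number.
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"{name} has no version {version}")
        self.aliases[(name, alias)] = version

    def load(self, name: str, alias: str) -> PromptVersion:
        # Resolve the alias to whichever version it currently points at.
        return self.versions[name][self.aliases[(name, alias)] - 1]


registry = PromptRegistry()
registry.register("summarize", "Summarize: {{text}}", "initial prompt")
registry.register("summarize", "Summarize in 3 bullet points: {{text}}", "ask for bullets")

registry.set_alias("summarize", "production", 1)
registry.set_alias("summarize", "staging", 2)

# Application code only ever asks for the "production" alias...
prompt = registry.load("summarize", "production")
print(prompt.version, prompt.template)

# ...so promoting version 2 is a registry change, not a code change.
registry.set_alias("summarize", "production", 2)
print(registry.load("summarize", "production").template)
```

Because versions are never mutated, a rollback is the same one-line alias change pointed at an older version number.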

📢 ANNOUNCEMENTS

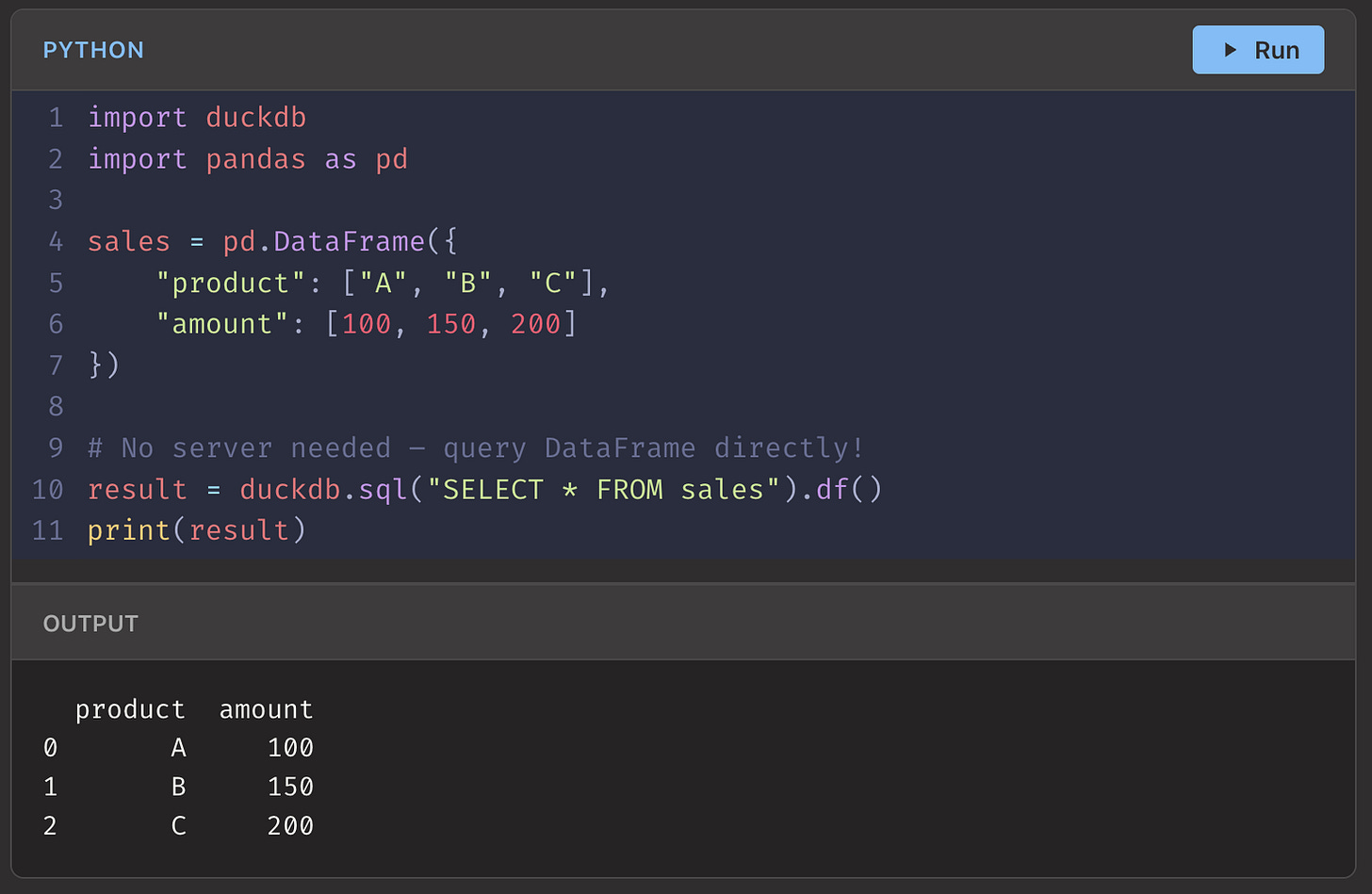

Introducing CodeCut Premium

I put a lot of effort into making every CodeCut blog clear, practical, and example-driven. Still, there’s a gap between reading code and actually writing it yourself.

CodeCut Premium bridges that gap with interactive courses that let you:

Execute code directly in your browser

Skip installation and environment setup

Test your understanding with built-in quizzes

Learn faster than you would by sitting through long video courses

I plan to add new courses regularly, with a focus on quality and depth. The catalog is still growing, and Founding Members get early access plus exclusive perks as it expands.

Founding Members receive lifetime $12/month pricing, full access to all courses, and early influence on future content.

Founding pricing ends March 31, 2026.

☕️ Weekly Finds

zipline [Finance] - Pythonic algorithmic trading library with event-driven backtesting for building and testing trading strategies

outlines [LLM] - Structured text generation library that constrains LLM outputs to follow specific schemas, formats, and data types

responses [Testing] - Utility library for mocking out the Python Requests library in tests with simple decorators and context managers

📚 Latest Deep Dives

5 Python Tools for Structured LLM Outputs: A Practical Comparison - Compare 5 Python tools for structured LLM outputs. Learn when to use Instructor, PydanticAI, LangChain, Outlines, or Guidance for JSON extraction.

Before You Go

🔍 Explore More on CodeCut

Tool Selector - Discover 70+ Python tools for AI and data science

Production Ready Data Science - A practical book for taking projects from prototype to production

💬 Rate Your Experience

How would you rate your newsletter experience? Share your feedback →