Handle Messy Data with RapidFuzz Fuzzy Matching

Plus efficient LLM inference with vLLM

Grab your coffee. Here are this week’s highlights.

📅 Today’s Picks

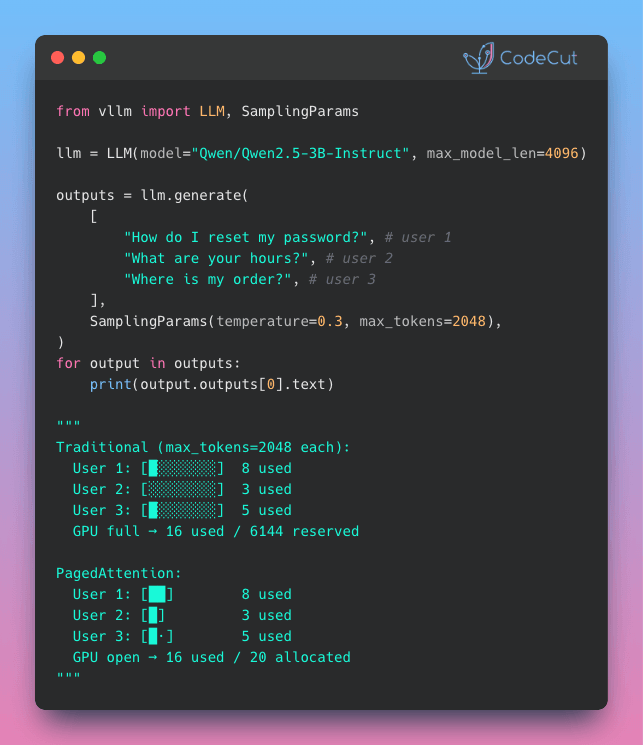

vLLM: Serve More LLM Users on the Same GPU

Problem

Many LLM serving frameworks reserve GPU memory for the longest possible response on every request, even when the actual output is short.

With multiple concurrent users, the GPU quickly fills with reserved memory that mostly goes unused.

Solution

vLLM’s PagedAttention allocates KV cache memory in small fixed-size blocks as the response grows, instead of reserving everything up front.

Memory is claimed only as tokens are generated, so the same GPU can serve more concurrent requests.
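To make the difference concrete, here is a toy calculation that only counts token slots. It is not vLLM's implementation: the function names, the 2,048-token maximum, the 16-token block size, and the response lengths are all illustrative assumptions.

```python
import math

# Illustrative assumptions, not values read from vLLM
MAX_TOKENS = 2048  # longest response a request is allowed to produce
BLOCK_SIZE = 16    # tokens held by one memory block


def reserved_slots(lengths, max_tokens=MAX_TOKENS):
    """Up-front strategy: every request reserves room for the longest response."""
    return len(lengths) * max_tokens


def paged_slots(lengths, block_size=BLOCK_SIZE):
    """Block strategy: each request holds only the blocks its tokens have filled."""
    return sum(math.ceil(n / block_size) * block_size for n in lengths)


# Hypothetical output lengths for four concurrent requests
response_lengths = [40, 120, 300, 75]

upfront = reserved_slots(response_lengths)
paged = paged_slots(response_lengths)

print(f"Reserved up front: {upfront} token slots")  # 8192
print(f"Allocated in blocks: {paged} token slots")  # 560
```

In this toy setup the four requests generate 535 tokens in total, so block allocation wastes at most part of one block per request, while up-front reservation holds thousands of slots that are never filled.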

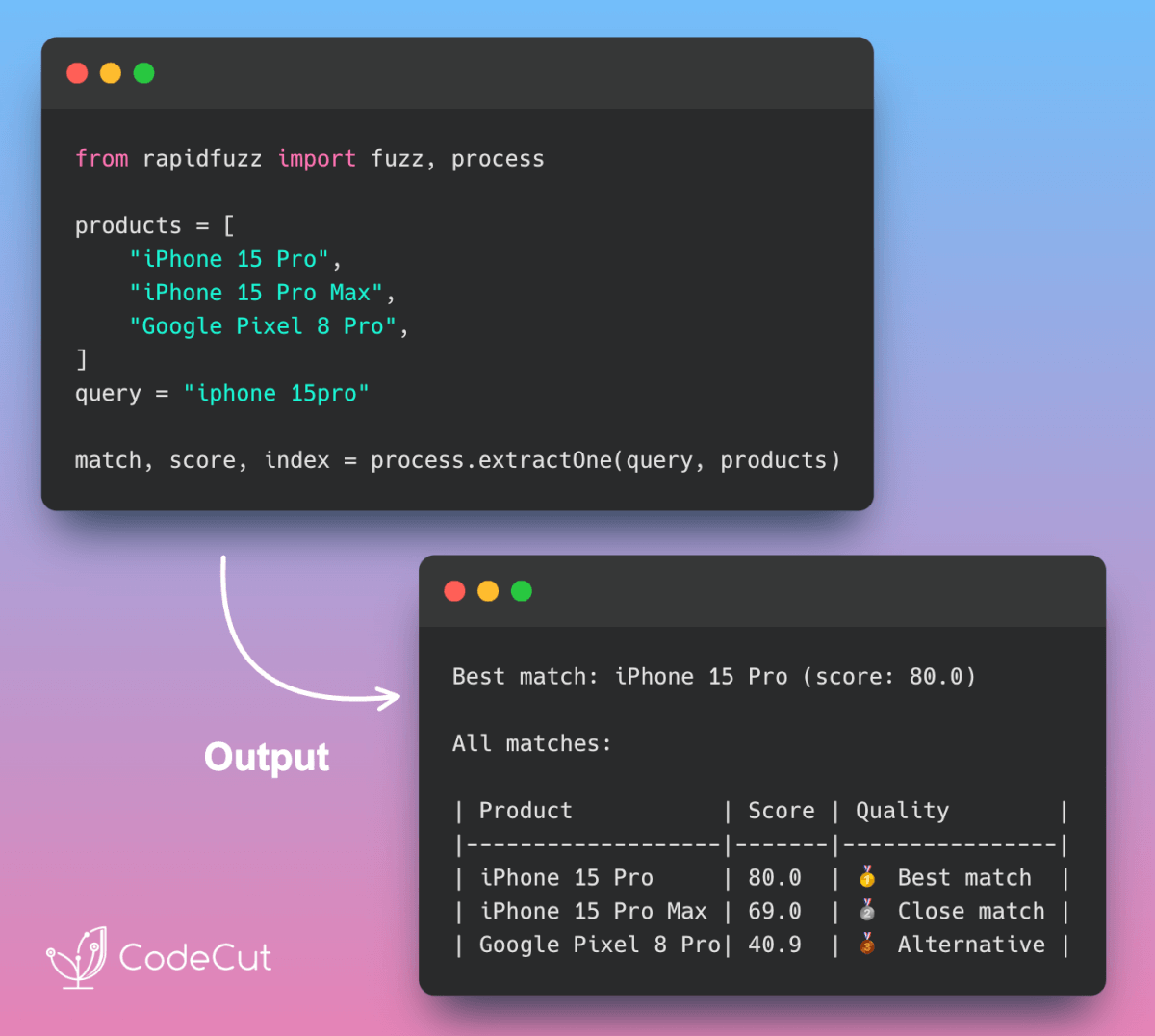

Handle Messy Data with RapidFuzz Fuzzy Matching

Problem

Regex-based matching can take hours of preprocessing and still breaks on common data variations such as missing spaces, typos, or inconsistent formatting.

Solution

RapidFuzz cuts most of that cleanup work by scoring how similar two strings are, so near matches are found without writing an exact pattern for every variation.

Key benefits:

Tolerates typos and spacing variations, and case differences once strings are normalized (for example with utils.default_process)

Production-ready C++ performance for large datasets

Full spectrum of fuzzy algorithms in one library

☕️ Weekly Finds

TextAttack [NLP] - Python framework for adversarial attacks, data augmentation, and model training in NLP

stanza [NLP] - Stanford NLP’s Python library for tokenization, sentence segmentation, NER, and parsing across 60+ languages

GLiNER2 [NLP] - Unified schema-based framework for entity extraction, text classification, and relation extraction in one pass

🔍 Explore More on CodeCut

Tool Selector - Discover 70+ Python tools for AI and data science

Production Ready Data Science - A practical book for taking projects from prototype to production